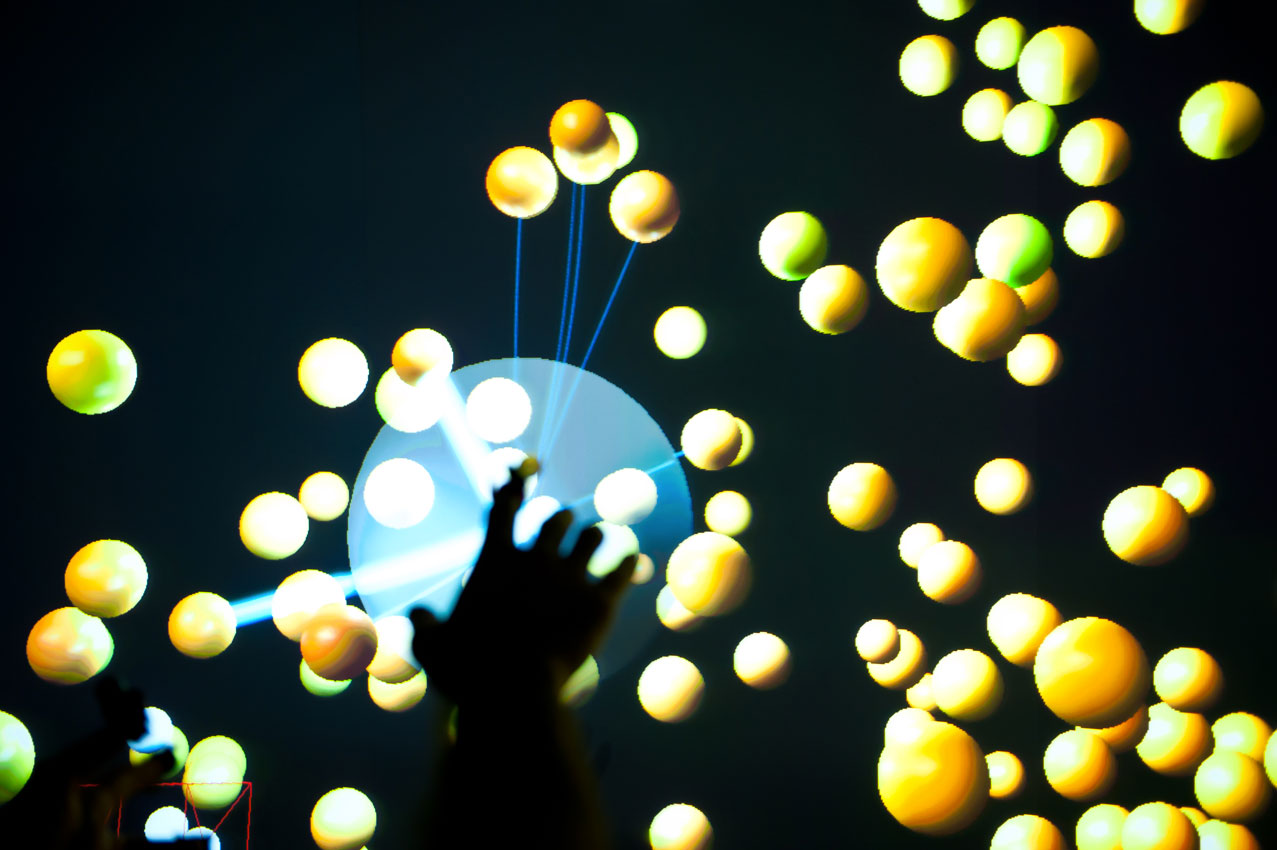

Virtual reality lets a user enter a synthetic world in which space and time can be manipulated according to the laws and stimuli designed by the programmer. This installation explores that concept, focusing on the multimodal nature of VR. Immersed in the virtual environment, the user is free to travel through an audiovisual world where fragments of recorded sound are physically scattered around the space. Through direct interaction, these fragments (called audio units) can be moved and triggered, breaking their rigid time order to create novel, arbitrary sound paths in a multi-dimensional space.

In vrGrains, prerecorded sounds and live audio inputs are chopped into units and scattered across an empty virtual environment in the form of small spheres. Each sphere is characterized by a physical behavior (the parameters of a spring-mass system) and by a color, both of which depend on the results of a real-time audio feature analysis.

The user is free to move within this environment, rearrange the physical positions of the audio units and trigger them using only hands and fingers. The right index finger can be used to move single units, changing their speed and acceleration and thereby producing collisions. Each time two or more units collide, their audio content is triggered automatically. The right index finger is also surrounded by a transparent globular field, and all the units inside this field are triggered randomly and continuously. Specific poses of the left hand change the behavior of the globular field on the opposite hand: the user can change the radius of the field, rigidly move a set of units together, create an attractive field, make units orbit around the right hand, and return them all to their original positions. All of these interaction modalities are enriched by shaders, animated lights and particle systems.
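The behavior described above can be illustrated with a minimal sketch. It is not the installation's code (which runs in Unity3D); it only assumes that each unit is a damped spring anchored to its original position, that a collision is a simple sphere overlap, and that the globular field is a distance test around the fingertip. The names `Unit`, `step`, `colliding_pairs` and `units_in_field`, and all parameter values, are hypothetical.

```python
import math
from dataclasses import dataclass, field
from itertools import combinations


@dataclass
class Unit:
    """One audio unit drawn as a sphere (all default values are illustrative)."""
    rest: tuple                      # original position the spring pulls back to
    pos: list                        # current position [x, y, z]
    vel: list = field(default_factory=lambda: [0.0, 0.0, 0.0])
    mass: float = 1.0
    stiffness: float = 4.0           # spring constant; could be set from audio features
    damping: float = 0.5
    radius: float = 0.05


def step(unit, dt):
    """Advance one unit by dt seconds with semi-implicit Euler integration."""
    for i in range(3):
        force = (-unit.stiffness * (unit.pos[i] - unit.rest[i])
                 - unit.damping * unit.vel[i])
        unit.vel[i] += force / unit.mass * dt
        unit.pos[i] += unit.vel[i] * dt


def colliding_pairs(units):
    """Return index pairs of units whose spheres overlap (candidates to trigger)."""
    return [(i, j)
            for (i, a), (j, b) in combinations(enumerate(units), 2)
            if math.dist(a.pos, b.pos) <= a.radius + b.radius]


def units_in_field(units, center, field_radius):
    """Return indices of units inside the globular field around the fingertip."""
    return [i for i, u in enumerate(units)
            if math.dist(u.pos, center) <= field_radius]
```

In such a scheme, every frame would call `step` on each unit, trigger the audio of every pair returned by `colliding_pairs`, and randomly pick units from `units_in_field` to trigger continuously.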

The installation is projected onto a Powerwall by a pair of active stereoscopic projectors. The user’s hands and fingers are tracked by a set of 12 infrared mocap cameras, which detect the positions of lightweight passive reflective markers. The user’s head is tracked with an ultrasonic inertial tracker so that the display perspective can be corrected. Each finger is distinguished from the others, and poses are recognized, by a custom indexing algorithm wrapped in a C++ library.
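Head-tracked perspective correction on a fixed screen is commonly done with an off-axis (asymmetric) view frustum. The sketch below shows that general idea only; it is not taken from the installation, where this step is presumably handled by the rendering middleware. It assumes a flat screen centered at the origin in the plane z = 0, with the tracked eye at a positive z distance in front of it. The function name `off_axis_frustum` and its parameters are hypothetical.

```python
def off_axis_frustum(eye, screen_width, screen_height, near):
    """Return (left, right, bottom, top) frustum extents at the near plane.

    Assumes the screen is centered at the origin in the plane z = 0 and the
    eye position (ex, ey, ez) is given in the same units, with ez > 0.
    """
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("the eye must be in front of the screen (ez > 0)")
    scale = near / ez  # similar triangles: screen plane -> near plane
    left = (-screen_width / 2 - ex) * scale
    right = (screen_width / 2 - ex) * scale
    bottom = (-screen_height / 2 - ey) * scale
    top = (screen_height / 2 - ey) * scale
    return left, right, bottom, top
```

With the eye centered the frustum is symmetric; as the head moves sideways it becomes skewed, which keeps the virtual scene registered to the physical screen. For stereoscopic output, the same computation would be run once per eye, each offset by half the interocular distance.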

The virtual environment is designed and animated in Unity3D; all the graphic effects are achieved through shaders. Stereoscopic rendering relies on MiddleVR, a plugin that gives Unity access to quad-buffer OpenGL rendering.

Unity communicates via OSC with an audio engine built in Max/MSP with the FTM&Co package. The synthesis technique used is corpus-based concatenative synthesis.
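To give an idea of what travels between the graphics side and the audio engine, here is a minimal sketch of how an OSC 1.0 message is laid out in bytes. The message scheme of the installation is not documented here, so the address `/unit/trigger` and its arguments (a unit index and a gain) are purely hypothetical, as are the helper names.

```python
import struct


def _osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address, *args):
    """Build an OSC 1.0 message from int (i), float (f) and str (s) arguments."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)   # 32-bit big-endian integer
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)   # 32-bit big-endian float
        elif isinstance(arg, str):
            tags += "s"
            payload += _osc_string(arg)
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return _osc_string(address) + _osc_string(tags) + payload


# Hypothetical message: trigger audio unit 7 at gain 0.5.
packet = osc_message("/unit/trigger", 7, 0.5)
```

A packet like this would typically be sent over UDP (for example with `socket.sendto`) to the host and port on which the audio engine listens; those values depend on the setup and are not specified in this description.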

![Hybrid Reality Performances [2009-2012, Ph.D. Thesis ]](https://toomuchidle.com/wp-content/uploads/2014/07/VR_sculpting-150x150.jpg)